Client

Government Digital Service

Year

2026

Brief

Explore AI for public services by understanding and translating real-world behaviours of trust into digital prototypes.

Keywords

Research, insight development, concept design, interactive prototyping

Packages

Frame - Discover - Imagine

Designing the Agentic State

We’ve been exploring how AI can feel more human for several years now, across consumer products, health, and enterprise.

In 2025 and 2026, we partnered with AI Studio at Government Digital Service (GDS) to ask a more radical question:

What if government services could act on your behalf?

Not just answering questions, but anticipating needs and navigating the messy complexity of real life.

Before designing anything digital, we set out to understand how trust and delegation already work between people.

We spent time with carers, advisers, teachers, birth doulas, charity workers, and informal support networks. We visited a Maggie’s Centre for cancer care, where staff deliberately delay action to create space for conversation. We attended Citizens Advice sessions, where advisers print out plans that people can reshape and take home.

Across all of this, we saw the same patterns emerge – subtle, human ways of making it feel safe to let someone else act on your behalf.

Together with the GDS team, we translated these behaviours into six design themes and prototyped each one. Three of those themes are detailed below – more will be published in the coming weeks.

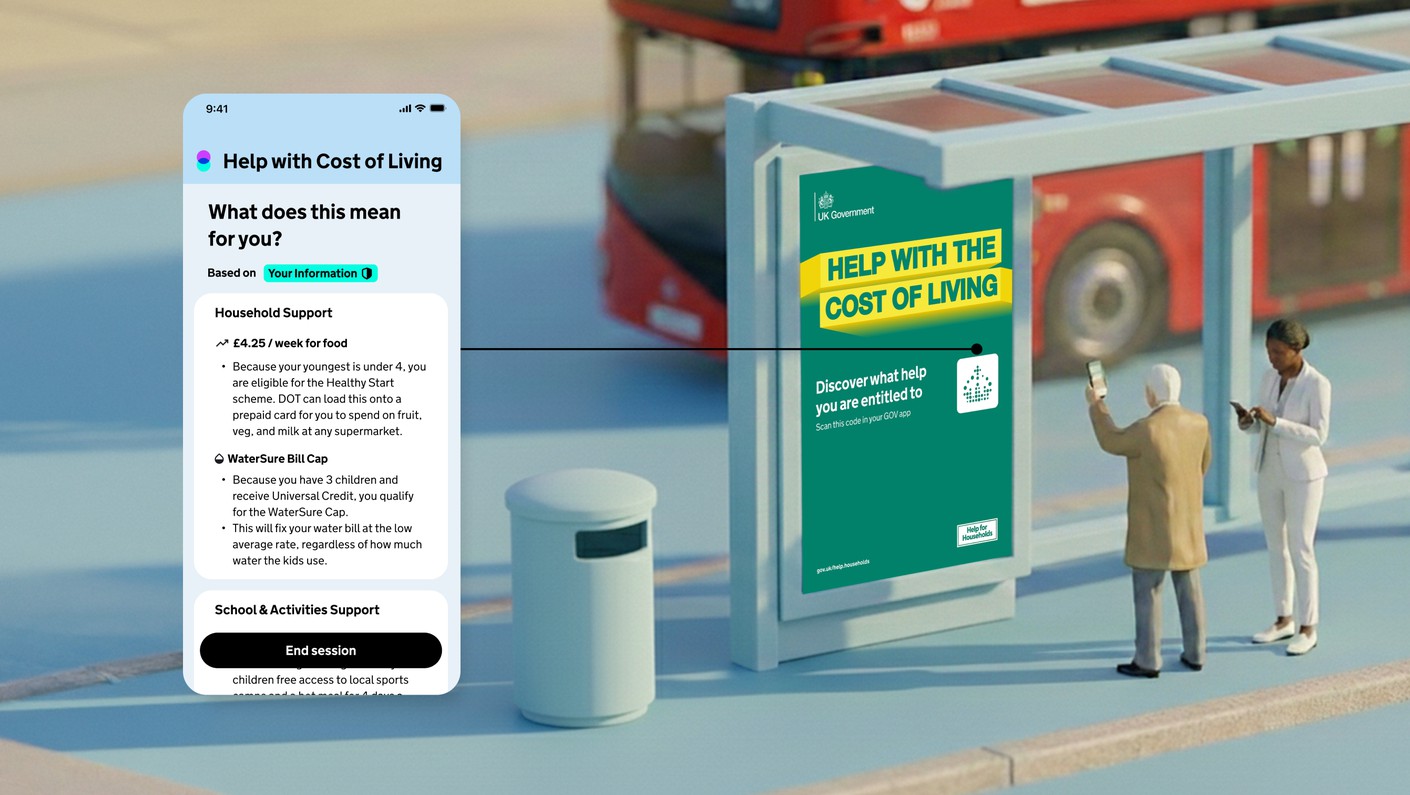

How might agents become findable?

In real life, people don’t start with government websites. They find help through trusted places, like a library, a GP surgery, or a friend who’s been through it before.

We explored what it would mean for AI agents to exist in those same spaces.

We designed a system of scannable markers, printed on letters, shared in group chats, and placed on noticeboards, that open a conversation relevant to your situation.

The idea was to create the kind of help that meets you where you already are, rather than asking you to go looking for it.

Read AI Studio at GDS's full post on this theme

How might agents make their work visible?

When someone helps you in real life, you can see what they’re doing. They sketch things out, talk through options, and check before they act.

We brought that same principle into AI.

We prototyped interfaces that show what an agent plans to do, what it needs from you, and what the consequences are. Building trust means turning every action into something you can inspect, pause, or refuse.

Read AI Studio at GDS's full post on this theme

How might AI agents separate thinking from doing?

One of the clearest findings from our research was that people stop asking for help when action feels immediate or irreversible. So we designed a clear separation between thinking and doing.

What did this look like in practice? Private spaces to explore “what if” scenarios without triggering anything, and a visible, deliberate step when exploration turns into action.

Read AI Studio at GDS's full post on this theme

“Special Projects were dream collaborators. They brought curiosity, creativity and technical depth to a complex space of helping people interact with government. Their discovery process was a highlight: the research felt alive, and the insights it brought out were unexpected and illuminating.”

Kuba Bartwicki

Head of Design, Products and Services at Government Digital Service

The brief asked us to explore AI for public services.

What we actually explored was something more fundamental:

What makes people willing to let something act on their behalf – and what breaks that trust.

The more we work in this space, the clearer it becomes that the real design material isn’t the technology. It’s the relationship between a person and whatever is acting for them.
